TL;DR

• AI is reshaping the software development lifecycle (SDLC) end to end, moving from isolated tools to connected, agent-driven workflows

• Autonomous testing is emerging as part of this shift, not as a separate capability

• Adoption is real—but still early, with measurable gains for leading teams

• Trust in results becomes critical as AI takes on more responsibility

AI is moving beyond simple code assistance. In the session "Autonomous Testing in Action: How Teams Are Using AI to Deliver Quality at Scale," guest speaker Diego Lo Giudice, VP and Principal Analyst at Forrester, explained how we are entering the era of the Agentic SDLC: a shift from isolated AI copilots to coordinated AI agents that collaborate across the entire development lifecycle. He also explored how this evolution is transforming quality at scale.

Understanding this shift explains why autonomous testing is not a luxury but the logical evolution of a connected development system.

The Evolution: From TuringBots to Agentic AI

In 2021, Forrester introduced the concept of TuringBots: AI assistants infused into every stage of the SDLC. Today, that vision has matured. We are moving from Phase 1 (individual assistance) into Phases 2 and 3, where these bots become smarter, more collaborative, and increasingly autonomous.

“TuringBots are becoming agentic software development…the tester bot might talk to the code bot and say, ‘Hey, can you test this code I just generated?’”

— Diego Lo Giudice, Forrester VP and Principal Analyst

Testing as a Connected Workflow, Not a Phase

One of the session's strongest strategic points was that testing is no longer a downstream check. In an agentic model, testing is woven into planning and delivery.

- Context Sharing: AI agents share requirements and design specs to inform test cases instantly.

- Triggered Autonomy: Code generation automatically triggers validation cycles.

- Cross-Lifecycle Intelligence: Insights from testing flow back into the backlog to prioritize fixes.

The Reality of Adoption: Progress in the “Hurricane”

While the vision is expansive, the current reality is a hybrid model. Testing and code generation are the top two use cases for AI today, but adoption follows a measured pattern.

According to Diego’s research on organizations using autonomous testing platforms:

- Automation Baseline: These teams automate roughly 51–60% of their tests.

- The AI Lift: AI contributes an additional 21–30% to that automation.

These figures suggest that, while we are in the “center of the hurricane,” a large share of testing is still not automated, and human oversight remains the essential bridge to full autonomy.

The Strategic Shift: Speed vs. Quality

As development accelerates, testing naturally feels the impact. With more code being generated faster than ever, the demand for testing has exploded. The goal isn’t just to “keep up,” but to ensure that speed doesn’t compromise the user experience.

Diego notes that as release cycles compress, testing becomes the enterprise's primary line of defense. This is why the industry is moving toward Autonomous Testing Platforms: tools that can heal their own tests when the application changes, generate their own test data, and keep pace with rapid code changes.

Building Trust Through Determinism

For AI to succeed in the enterprise, it must be trusted. This requires more than just “good” results; it requires transparency and reliability.

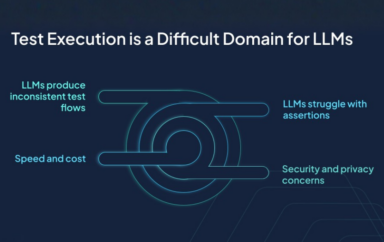

Applitools CEO Anand Sundaram noted that trust is built through deterministic models—AI that provides consistent, reproducible outcomes without “hallucinations.” When AI takes on more responsibility, the organization’s confidence in the tool’s output becomes the deciding factor for scaling.
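One practical way to probe the "consistent, reproducible outcomes" property is to run the same check several times on the same input and compare the results. The Python sketch below does this by hashing each result. It is a generic illustration, not Applitools' method; `is_reproducible`, `pixel_diff`, and `drifting_validator` are hypothetical names, and the "screenshots" are toy pixel lists.

```python
import hashlib
import json
from typing import Any, Callable


def fingerprint(result: Any) -> str:
    """Stable SHA-256 hash of a JSON-serializable result (keys sorted)."""
    encoded = json.dumps(result, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def is_reproducible(validator: Callable[[Any], Any], test_input: Any, runs: int = 5) -> bool:
    """True if every run on the same input yields an identical result."""
    prints = {fingerprint(validator(test_input)) for _ in range(runs)}
    return len(prints) == 1


def pixel_diff(pair: tuple[list[int], list[int]]) -> dict:
    """Deterministic check: counts pixels that differ from the baseline."""
    baseline, candidate = pair
    return {"changed_pixels": sum(a != b for a, b in zip(baseline, candidate))}


calls = {"n": 0}


def drifting_validator(pair: tuple[list[int], list[int]]) -> dict:
    """Simulates a model whose verdict depends on hidden state, not the input."""
    calls["n"] += 1
    return {"verdict": "pass" if calls["n"] % 2 else "fail"}


screens = ([0, 0, 255, 255], [0, 0, 255, 0])
print(is_reproducible(pixel_diff, screens))          # True
print(is_reproducible(drifting_validator, screens))  # False
```

Passing this check shows only that a validator is repeatable on one input; it says nothing about whether its verdict is correct, so it complements rather than replaces accuracy testing.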

What This Means for Engineering Leaders

The transition to an Agentic SDLC requires a mental shift in how teams are managed. For leaders, the focus should be on three key areas:

- Prioritize Context Engineering: AI is only as good as the data it has. Ensuring your agents have the right business context is critical for accurate testing.

- Adopt Parallel Workflows: Move away from sequential “dev-then-test” thinking. Prepare teams to manage multiple asynchronous agents working in tandem.

- Invest in “Human-in-the-Loop” Excellence: Shift the role of the tester from manual execution to “Agent Manager,” focusing on high-level quality strategy and system-wide insights.

Where This Is Heading

The Agentic SDLC changes more than how software is built—it changes how it’s validated. As AI accelerates development and connects workflows, testing becomes a continuous, system-level function, not a final checkpoint.

Autonomous testing is how teams keep that system in balance.

Because in a world where software is generated faster than ever, the real advantage isn’t speed. It’s knowing what you can trust.

Quick Answers

What is autonomous testing?

Autonomous testing refers to the use of AI to automate testing activities, increasingly as part of connected workflows across development and delivery.

How does autonomous testing fit into AI-driven development?

As AI systems expand across the lifecycle, testing becomes part of these connected workflows. Autonomous testing is one way testing evolves within that system.

What is the Agentic SDLC?

The Agentic SDLC refers to a shift from isolated AI tools to coordinated AI agents working across the software development lifecycle. Instead of assisting with individual tasks, these systems collaborate across planning, coding, testing, and delivery to enable more continuous and automated workflows.

What role do autonomous testing platforms play?

Autonomous testing platforms help integrate testing into the broader AI-driven workflow. They can generate tests, execute them reliably, and adapt to changes as code evolves. In an agentic model, these platforms act as the validation layer, ensuring that continuous development is matched by continuous, trustworthy testing.