Eric Terry has spent years leading engineering teams through the real-world tradeoffs between speed, cost, and quality. As an Applitools Ambassador, he brings a practical perspective shaped by teams that don’t have the luxury of optimizing for just one dimension.

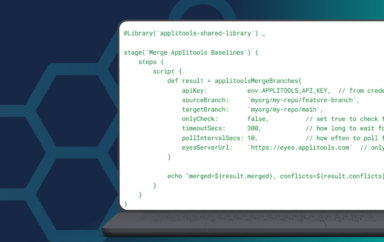

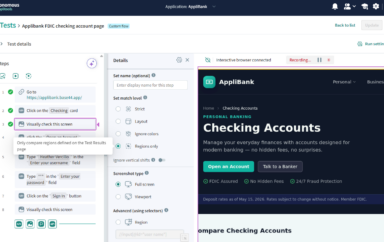

In a talk last year, Eric outlined how leading teams are increasingly adopting a hybrid approach to testing—balancing code-driven rigor with no-code flexibility to move faster without sacrificing confidence. That same tension shows up in how teams think about delivery tradeoffs more broadly.

The post below captures a core idea many teams still struggle with: you don’t get cheap, fast, and high-quality at the same time. The real question is which tradeoff you’re making—and whether you’re making it deliberately.

This article was originally published by Eric Terry on LinkedIn. It has been lightly adapted and republished with permission. You can read the original post here.

Eric expands on this approach in more detail in this session on how top teams combine code-driven and no-code testing approaches.

There is a common saying in the world of production, “You can have two of three options: cheap, fast, and good.” Does that hold true in a world where Artificial Intelligence (AI) has become prolific? The site There’s An AI For That boasts an impressive list of 46,346 AI tools for 11,373 tasks and 5,194 jobs as of February 23, 2026, a figure that underscores just how rapidly the landscape is expanding.

There is no doubt that AI will accelerate the “fast” and “cheap” aspects of work. But as the old adage goes, “you get what you pay for.” This article will explore three key points to keep in mind while putting together the next $1T idea: AI supercharges the fast and cheap, humans need to stay involved, and AI is a tool, not a replacement. That last point may not sit well with a lot of C-suite and board members. Just remember, Claude and Gemini have access to board meeting notes and emails too.

Insight 1: AI Supercharges “Fast” and “Cheap”

It is undeniable that one of the most immediate and tangible benefits of AI is its ability to dramatically increase speed and efficiency. This should increase productivity and lead to significant cost savings.

The promise of accelerated productivity is real. AI excels at handling repetitive, time-consuming tasks at a speed far beyond human capabilities. This includes processing thousands of documents in minutes, analyzing complex datasets in real time, and generating initial drafts of reports or code.

One might argue that this was already possible with low-level C and Assembly programming. Correct, but even the most hard-core software engineer can attest to the power of assisted coding with generative AI tools. Research has shown that developers using these tools can complete certain coding tasks up to twice as fast as with traditional methods. The part left out of the glossy sales pitch? AI is messy when it comes to complex engineering tasks. For the foreseeable future, humans will need to stay in the loop for all content generated with the aid of AI.

Remember, AI is a tool to enhance the workforce, not replace it. Automation is a tool used to help reduce repetitive manual tasks. Automation already increases operational efficiency. Using the two in tandem can lead to substantial savings. This coupling can be referred to as Agentic AI, which leverages the autonomy of automation and the productivity of AI.

A more tangible example is in the field of arbitration. The use of an “AI Arbitrator” agent is projected to lead to 20–25% faster resolution times and cost savings of 35%.

Businesses that embrace these types of tools with an eye towards scaling up will solve the riddle of how to do more with less. AI-powered systems, like chatbots and AI agents, can handle a massive volume of interactions simultaneously, freeing up human agents to focus on more complex, high-value issues. Note, again, that humans need to remain the apex business resource.

Insight 2: The Human-in-the-Loop: The Guardian of “Good”

The next insight expands on the first. While AI is powerful, it has significant limitations that make human oversight essential for quality, ethical considerations, and true innovation. This is often referred to as a “Human-in-the-Loop” (HITL) system.

AI models struggle with ambiguity, bias, and edge cases that fall outside their training data. These tools are fantastic at their intended goal: creating plausible content. The problem is they are not good at validating that content. This leads to “hallucinations”, confident but incorrect outputs.

Imagine the embarrassment that comes from pushing content that has not been validated. Air Canada’s misfortune can serve as a cautionary tale. The airline’s chatbot told a traveler they could apply for a bereavement discount after booking the flight, only for a human agent to later inform them that the policy required the request to be submitted before booking. The airline refused to honor the fabricated policy. Adding to its troubles, the airline then attempted to claim that the chatbot was a “separate legal entity that is responsible for its own actions.” A human in the loop most likely would have resulted in a better customer outcome and less legal exposure, not to mention less reputational damage.

Another critical role for humans is to ensure that AI systems operate ethically. They need to identify and address biases that may be present in the data or algorithms. The Large Language Models (LLMs) and other generative models that power most AI systems are trained on enormous amounts of text, images, and sound. The poor quality of some of those inputs, along with a lack of diverse voices, can lead to unsavory outcomes.

AI also lacks true creativity and emotional intelligence. It generates content by remixing and repurposing existing data, without the emotional depth and personal experience that are the hallmarks of human insight and intelligence. Over-reliance on AI can even lead to a homogenization of ideas, an anti-pattern when it comes to creating content that resonates with a human audience.

Insight 3: The Power of Partnership: Augmenting, Not Replacing

The last and most important insight is this: AI should not be viewed as the magic bullet that will reduce the workforce. Effective use of AI is not a replacement for human intelligence. It is a tool, a partner, that augments human capabilities. Companies that understand this will be far ahead of their competitors. In fact, by one count 42% of companies are abandoning their AI projects, and the cause has nothing to do with the technology and everything to do with the approach.

This same principle is playing out in modern testing strategies, where teams combine code-driven and no-code approaches to balance speed with reliability.

Get the idea of cheap or free labor out of your head. Instead, focus on workflow transformation. Use AI to train and assist more junior-level employees. Utilize your top talent to be the Human-in-the-Loop. Create a continuous feedback loop where humans provide input and corrections that help to train and refine AI models, making them more accurate and reliable over time. However, the goal is not just to build “perfect” algorithms; it is to create systems that are built around human guidance and interaction.
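For readers who think in code, the feedback loop described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not a reference implementation: the names `Review`, `FeedbackLog`, `publish_with_review`, `fake_model`, and `senior_reviewer` are all hypothetical, the "model" is a stub that returns a fixed string, and the policy text is invented for the example.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass
class Review:
    """A human reviewer's verdict on one AI draft (hypothetical type)."""
    approved: bool
    correction: Optional[str] = None  # replacement text when the draft is rejected


@dataclass
class FeedbackLog:
    """Collects prompt/draft/correction records for later model refinement."""
    records: List[Dict[str, str]] = field(default_factory=list)

    def add(self, prompt: str, draft: str, correction: str) -> None:
        self.records.append(
            {"prompt": prompt, "draft": draft, "correction": correction}
        )


def publish_with_review(
    prompt: str,
    generate: Callable[[str], str],
    review: Callable[[str, str], Review],
    log: FeedbackLog,
) -> str:
    """Nothing is published until a human has approved or corrected the draft."""
    draft = generate(prompt)          # fast and cheap: the AI produces a draft
    verdict = review(prompt, draft)   # good: a human validates it
    if verdict.approved:
        return draft
    if verdict.correction is None:
        raise ValueError("A rejected draft needs a human correction.")
    log.add(prompt, draft, verdict.correction)  # feeds the refinement loop
    return verdict.correction


# Stubs standing in for a real model and a real reviewer (assumptions).
def fake_model(prompt: str) -> str:
    return "Discounts can be requested after booking."


def senior_reviewer(prompt: str, draft: str) -> Review:
    if "after booking" in draft:
        return Review(False, "Discount requests must be submitted before booking.")
    return Review(True)


log = FeedbackLog()
answer = publish_with_review(
    "What is the discount policy?", fake_model, senior_reviewer, log
)
print(answer)
print(len(log.records))
```

The design choice worth noticing is that the human verdict sits between generation and publication, and every correction is kept. That record of corrections is what makes the loop "continuous" rather than a one-off spot check.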

Implementing AI this way delivers the best of both worlds. It’s a collaborative approach that combines the efficiency and processing power of AI with the precision, nuance, and ethical reasoning of human oversight.

Conclusion

AI is here to stay. It is set to revolutionize the “fast” and “cheap,” but the “good” will continue to be the domain of human judgment, creativity, and oversight. The future of high-quality work lies in the powerful synergy between humans and AI.

There is, however, a dark side to all of this that deserves far more attention than it currently receives. We seem to have forgotten our custodial responsibilities. As someone who watched my fair share of Captain Planet as a kid, this does not sit well with me.

The environmental impact of AI is substantial and growing. Data centers already account for a significant share of global electricity consumption, and that demand is rising sharply as AI workloads scale. Beyond electricity, large-scale AI infrastructure requires enormous quantities of fresh water for cooling, a resource that is increasingly scarce in many parts of the world. According to MIT, training and running large AI models carries a measurable carbon footprint that the industry has yet to adequately account for. These are not hypothetical concerns. They are present-day costs being paid by ecosystems and communities that have no seat at the table when technology investment decisions are made.

History and literature are full of examples of “just because you can, doesn’t mean you should.” History will place an asterisk by the names of Bezos, Gates, and Zuckerberg. Did they fly too close to the sun? Or did they bring us all closer to the dystopian visions of Waterworld or Mad Max: Fury Road?

AI is cool and exciting right now. Don’t take your eye off the adverse effects its overuse has on our habitat. Instead, lean into your inner Captain Planet, because “the power is yours!”

Quality Leader | Applitools Ambassador | Tinkerer & Gamer at Heart | Improving Software Quality & User Experience

Eric Terry is a seasoned, results-driven professional with a passion for excellence and a proven track record in Enterprise Application Development, Business Analysis, Team Management, and more. He is currently the Senior Director of Digital Content Production at EVERSANA INTOUCH, a leading global agency network in the life sciences industry. Connect with him on LinkedIn.

References

Alheraki, A. (2025, September 2). A professional Roadmap to Mastering C in Low-Level and Systems Programming. https://www.linkedin.com/pulse/professional-roadmap-mastering-c-low-level-systems-ayman-alheraki-5nzuf/

Captain Planet and the Planeteers (TV Series 1990–1996) – Quotes – IMDb. (n.d.). IMDb. https://www.imdb.com/title/tt0098763/quotes/?item=qt0411808

Coulter, D. (2025, June 7). AI vs Automation: What’s the Difference, and When Should You Use Each? Daniel Coulter. https://danielcoulter.com/posts/ai-vs-automation-whats-the-difference-and-when-should-you-use-each

Brenner, G. H. (2026, February 21). Going down the rabbit hole of AI sycophancy can leave you fried. Psychology Today.

Eeles, M. (2025, May 30). The AI reality check: why 42% of companies are abandoning their AI projects, and how to be in the 58% that succeed. LinkedIn.

Embry, S. (2025, October 7). The AI Arbitrator Is Here: What’s Next? Above the Law.

Explained: Generative AI’s environmental impact. (2025, January 17). MIT News | Massachusetts Institute of Technology.

Gajre, S. (2025, March 25). From Hours to Minutes—How AI is Rapidly Advancing in Complex Task Automation. LinkedIn.

Holdsworth, J. (2025, November 17). AI bias. What Is AI Bias? IBM.

MIT Sloan Teaching & Learning Technologies. (2025, June 30). When AI Gets It Wrong: Addressing AI Hallucinations and Bias.

Nwachukwu, C. [@promisevector]. (2023, June 18). Low-level Programming: Harnessing the Power of C. Medium.

Coursera Staff. (2025, June 8). Automation vs. AI: Meaning, Differences, and Real World Uses. Coursera.

Stryker, C. (2025, December 11). Agentic AI. What is Agentic AI? IBM.

Yagoda, M. (2024, February 23). Airline held liable for its chatbot giving passenger bad advice – what this means for travellers. BBC Travel.