TL;DR

• Generative AI is highly effective for creating tests, data, and analysis, but execution has different requirements.

• Test execution demands repeatability, determinism, and explainable failures.

• Probabilistic systems, including LLMs, introduce variability that leads to flaky tests and loss of trust.

• Teams that separate where generative AI helps from where deterministic execution is required scale testing more reliably.

Generative AI has dramatically changed how teams create tests. Requirements can be translated into test cases in seconds. Automation scripts can be bootstrapped with natural language. Test data can be generated on demand.

But many teams are discovering an uncomfortable truth: faster test creation does not automatically lead to more reliable releases.

Execution is where confidence is earned or lost. And test execution demands guarantees that generative AI—including large language models (LLMs)—was never designed to provide.

Where generative AI fits well in testing

Generative AI excels in parts of the testing lifecycle that tolerate variation. These are areas where approximation is acceptable and speed matters more than precision.

Teams are successfully using AI to:

- Generate test cases from requirements

- Assist with unit and integration test authoring

- Create realistic and varied test data

- Summarize test results and surface patterns

In most of these cases, teams rely on LLMs to draft what should be tested, not to make final execution or release decisions.

These use cases benefit from flexibility. Minor differences in output rarely introduce risk, and human review is often part of the workflow.

The challenge emerges when that same probabilistic behavior is extended into execution.

Why test execution is fundamentally different

Test execution is not a creative task. It is a verification task.

Execution requires:

- The same test to behave the same way, run after run

- Assertions that are precise and stable

- Failures that can be reproduced and diagnosed

- Outcomes that can be explained clearly to stakeholders

Generative AI systems—particularly LLMs—are probabilistic by design. That variability is useful for exploration and generation, but it works against the repeatability and determinism execution depends on.

As AI accelerates development, repeatability becomes more important than intelligence in test execution.
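A small sketch can make the contrast concrete. The candidate steps and weights below are invented for illustration and are only a toy stand-in for a model's output distribution, not a real LLM. One function samples a step by weight; the other always returns the same step.

```python
import random

# Hypothetical next-step candidates with made-up weights (illustration only).
CANDIDATES = {"click_submit": 0.6, "press_enter": 0.3, "click_save": 0.1}


def sample_step(rng):
    """Probabilistic choice: draw one step according to its weight."""
    steps = list(CANDIDATES)
    weights = list(CANDIDATES.values())
    return rng.choices(steps, weights=weights, k=1)[0]


def fixed_step():
    """Deterministic choice: always return the highest-weighted step."""
    return max(CANDIDATES, key=CANDIDATES.get)


def distinct_outcomes(runs):
    """Collect the set of distinct steps each strategy produces over `runs` calls."""
    rng = random.Random()  # deliberately unseeded, so results vary between runs
    sampled = {sample_step(rng) for _ in range(runs)}
    fixed = {fixed_step() for _ in range(runs)}
    return sampled, fixed


if __name__ == "__main__":
    sampled, fixed = distinct_outcomes(50)
    print("sampled:", sorted(sampled))
    print("fixed:  ", sorted(fixed))
```

Over enough runs the sampled set will usually contain more than one step, while the fixed set always contains exactly one. That difference is harmless when drafting ideas and costly when a pipeline must decide pass or fail.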

How probabilistic execution creates real problems

When probabilistic systems are used to drive execution, teams often encounter the same failure modes:

- Tests that pass one run and fail the next without code changes

- Assertions that subtly change or disappear between runs

- Longer debugging cycles because failures can’t be reproduced

- Rising compute costs from rerunning tests to confirm results

- Engineers losing confidence in automation results

When failures aren’t repeatable, teams stop trusting their tests—and that’s when automation becomes a bottleneck instead of a benefit.

– Shaping Your 2026 Testing Strategy

Once trust erodes, teams compensate. Manual validation creeps back in. Releases slow down. Automation becomes something teams work around rather than rely on.
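The first failure mode listed above, a test whose outcome changes without any code change, can be detected mechanically by rerunning the test and comparing outcomes. The following is a minimal sketch under that assumption; `classify_stability` and `make_alternating_test` are hypothetical helpers written for this example, not part of any test framework.

```python
import itertools
from typing import Callable


def classify_stability(test: Callable[[], None], runs: int = 5) -> str:
    """Run `test` several times on unchanged code and classify its behavior."""
    if runs < 1:
        raise ValueError("runs must be at least 1")
    outcomes = set()
    for _ in range(runs):
        try:
            test()
            outcomes.add("pass")
        except AssertionError:
            outcomes.add("fail")
    if outcomes == {"pass"}:
        return "stable-pass"
    if outcomes == {"fail"}:
        return "stable-fail"
    return "flaky"  # both outcomes seen with no code change


def make_alternating_test() -> Callable[[], None]:
    """Simulate a flaky test: it fails on every second call."""
    calls = itertools.count()

    def test() -> None:
        assert next(calls) % 2 == 0, "fails on every second call"

    return test


def always_passes() -> None:
    assert 1 + 1 == 2


if __name__ == "__main__":
    print(classify_stability(always_passes))
    print(classify_stability(make_alternating_test()))
```

A stable failure is still useful signal because it can be reproduced and diagnosed. The "flaky" classification is the one that erodes trust.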

Execution amplifies risk: security, governance, and explainability

Execution is also where risk concentrates.

When AI systems drive test execution, they may:

- Send application context to external services

- Make decisions that can’t be fully explained

- Produce outcomes that are difficult to audit

These concerns are most visible in regulated and high-risk environments, but they apply broadly. Any team responsible for production releases needs to be able to explain why a test failed—or why a release was approved.

Reliable execution is not just a technical concern. It’s a governance concern.

Why deterministic execution matters at scale

Deterministic systems behave predictably. Given the same inputs, they produce the same outcomes.

In test execution, this enables:

- Reliable failure reproduction

- Faster root cause analysis

- Lower maintenance overhead

- Clear audit trails

- Reduced noise in pipelines

What test execution demands is not intelligence, but guarantees: the same inputs producing the same outcomes, every time.

Reliable test execution depends on determinism, not creativity.
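One way to picture "same inputs, same outcomes" is a check written as a pure function of its inputs, with a fingerprint of the inputs and result recorded for the audit trail. This is a sketch only: `run_check`, the cart structure, and the fingerprint format are assumptions made for illustration.

```python
import hashlib
import json


def run_check(inputs: dict) -> dict:
    """Hypothetical deterministic check: the result depends only on `inputs`."""
    # Prices are integers (for example, cents) to avoid floating-point drift.
    total = sum(item["price"] * item["qty"] for item in inputs["cart"])
    expected = inputs["expected_total"]
    return {"expected": expected, "actual": total, "passed": total == expected}


def fingerprint(inputs: dict, result: dict) -> str:
    """Hash a canonical JSON form so identical runs yield identical records."""
    payload = json.dumps(
        {"inputs": inputs, "result": result},
        sort_keys=True,
        separators=(",", ":"),
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


if __name__ == "__main__":
    inputs = {
        "cart": [{"price": 250, "qty": 2}, {"price": 100, "qty": 1}],
        "expected_total": 600,
    }
    first = fingerprint(inputs, run_check(inputs))
    second = fingerprint(inputs, run_check(inputs))
    print("identical across runs:", first == second)
```

If two runs on the same inputs ever produce different fingerprints, something nondeterministic has entered the execution path, and that is worth investigating before the result is trusted.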

Rethinking AI’s role in execution

The goal is not to abandon generative AI. It’s to use it where it fits.

Effective teams are separating responsibilities:

- Generative AI for creation, exploration, and analysis

- Deterministic systems for execution and verification

This separation allows teams to move quickly without sacrificing confidence.
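The split can be sketched as follows, assuming a generated test is stored as plain data after human review and then run by a fixed interpreter. The step format, the `Cart` class, and the action names are hypothetical and exist only for this example.

```python
# A test spec as it might look after generation and human review.
# It is frozen as data; nothing is generated or reinterpreted at run time.
SPEC = [
    {"action": "add_item", "name": "pen", "qty": 2},
    {"action": "add_item", "name": "pad", "qty": 1},
    {"action": "assert_count", "expected": 3},
]


class Cart:
    """Tiny stand-in for the system under test."""

    def __init__(self):
        self.items = {}

    def add(self, name, qty):
        self.items[name] = self.items.get(name, 0) + qty

    def count(self):
        return sum(self.items.values())


def execute(spec):
    """Deterministic executor: fixed dispatch, explicit log, no guessing."""
    cart = Cart()
    log = []
    for index, step in enumerate(spec):
        action = step["action"]
        if action == "add_item":
            cart.add(step["name"], step["qty"])
            log.append((index, action, "ok"))
        elif action == "assert_count":
            ok = cart.count() == step["expected"]
            log.append((index, action, "ok" if ok else "fail"))
            if not ok:
                break  # stop at the first failed assertion
        else:
            # Unknown steps are rejected rather than interpreted creatively.
            raise ValueError(f"unknown action at step {index}: {action!r}")
    return log


if __name__ == "__main__":
    for entry in execute(SPEC):
        print(entry)
```

The design choice here is that variability is confined to authoring. Whatever produced `SPEC`, the executor treats it as fixed input, so every run yields the same step-by-step log.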

What this means for engineering and QE teams

As AI becomes more deeply embedded in testing workflows, the key decision is no longer whether to use AI, but where to use it.

Teams that succeed will:

- Accept variability where it’s safe

- Demand determinism where decisions are made

- Measure success by signal quality, not test count

- Optimize for trust before speed
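One possible reading of "signal quality" in the list above is the share of tests whose results disagree on unchanged code. A minimal sketch, assuming a hypothetical run history of (test name, commit, outcome) records; the metric definition is an illustration, not an established standard.

```python
from collections import defaultdict


def flake_rate(history):
    """Fraction of (test, commit) pairs that both passed and failed.

    `history` is an iterable of (test_name, commit, outcome) tuples,
    where outcome is "pass" or "fail".
    """
    outcomes = defaultdict(set)
    for test_name, commit, outcome in history:
        outcomes[(test_name, commit)].add(outcome)
    if not outcomes:
        return 0.0
    flaky = sum(1 for seen in outcomes.values() if len(seen) > 1)
    return flaky / len(outcomes)


if __name__ == "__main__":
    history = [
        ("login", "abc123", "pass"),
        ("login", "abc123", "fail"),  # same commit, different outcome
        ("checkout", "abc123", "pass"),
        ("checkout", "abc123", "pass"),
    ]
    print(flake_rate(history))
```

A suite can grow in test count while this number gets worse, which is why the two are worth tracking separately.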

The biggest risk in AI-driven testing isn’t lack of automation—it’s lack of trust.

Choosing confidence over convenience

Generative AI has changed how tests are created. It should not change the standards by which tests are trusted.

Execution is where reliability matters most. Teams that recognize this distinction will scale testing with confidence, even as AI continues to reshape software development.

Watch Shaping Your 2026 Testing Strategy now.

Quick Answers

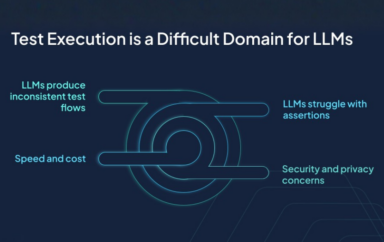

Why is generative AI a poor fit for test execution?

Generative AI systems, including LLMs, are probabilistic by design. This variability leads to inconsistent execution flows, unstable assertions, and failures that are difficult to reproduce.

Does this mean generative AI should not be used in testing?

No. Generative AI is highly effective for test creation, data generation, and analysis. Problems arise when it is used to drive execution and release decisions.

What is deterministic test execution?

Deterministic test execution produces consistent results given the same inputs, enabling repeatable failures, faster debugging, and greater trust in automation.

Why does execution matter more than test creation for release confidence?

Test creation accelerates coverage, but execution determines confidence. Reliable releases depend on predictable, explainable test outcomes.

How should teams combine generative AI with deterministic systems?

Use generative AI and LLMs where flexibility is helpful, and deterministic systems where verification and decision-making require guarantees.